
In Their Own Words

Cross-posted with permission from Health Affairs Forefront.

[Original Post: Eric Bressman. “AI Prescribing Medications In Utah: A Flawed Regulatory Playbook”, Health Affairs Forefront, April 17, 2026. https://doi.org/10.1377/forefront.20260413.968853 Copyright © 2026 Health Affairs by Project HOPE – The People-to-People Health Foundation, Inc.]

An experiment is taking place in Utah right now that may preview the future of artificial intelligence (AI)-driven medical care. Through its regulatory sandbox, the state has partnered with a company called Doctronic—which markets itself as an AI doctor—to allow its software to autonomously prescribe medication refills; similar pilots are set to follow. We should be wary.

At first glance, this may seem a modest step with potential upside for patients and providers. Refilling stable, chronic medications is certainly lower risk than diagnosing disease or performing surgery. It is also a high-volume, just-burdensome-enough task that is ripe for automation. But in regulatory terms, allowing an AI system to independently prescribe medications is a threshold no one has ever crossed—granting a machine authority that, until now, has required years of education, supervised training, standardized testing, and formal licensure.

That raises two urgent questions. First, does existing law actually authorize this? And second, perhaps more important, is it wise?

Oversight of clinical software has traditionally rested with the Food and Drug Administration (FDA), which regulates AI under its “Software as a Medical Device” framework. This approach is modeled on how the FDA assesses hardware, such as glucose monitors and pacemakers, and is better suited for traditional machine-learning algorithms with narrow uses (for example, a radiology tool that reads computed tomography [CT] scans), in which performance can be measured on a finite set of tasks. It was not designed for the current era of generative AI, in which a single model can perform an array of clinical functions, from interpreting lab results, to dosing medications, to counseling on disease management. This sounds less like a medical device and more like a human practitioner.

The FDA has largely sidestepped the problem and has yet to authorize a single generative AI model. It has managed this through a mix of exemption criteria (rooted in provisions of the 21st Century Cures Act that exclude certain clinical decision support tools from device regulation) and companies' deliberate efforts to avoid the device designation. But Utah and Doctronic are barreling right in, and according to the FDA's own guidance documents, this use case meets the definition of a device.

Why hasn't the FDA stepped in? The agency has not commented publicly on the Utah pilot, and by Doctronic's account, the company has not communicated with the FDA (although that may change soon). Utah and Doctronic maintain that the authority to prescribe medications falls under the laws that regulate the practice of medicine (that is, those governing medical licensure), which are the states' jurisdiction. Notably, a bill pending in Congress, which has not yet made it out of committee, would amend the Food, Drug, and Cosmetic Act of 1938 to allow AI systems to legally prescribe medications with state approval. The existence of such a bill suggests that, until it passes, the legal picture is at minimum unsettled. Amid the FDA's silence, though, the Utah experiment has forged ahead.

Legal issues aside, it is worth considering whether this is the direction we want to take. My colleagues and I have argued elsewhere in favor of adopting a licensure framework for AI, recognizing that generative AI tools function more like human clinicians than like traditional medical devices. Licensure pairs entry-to-practice standards (training requirements and competency assessments) with ongoing surveillance and education, an approach better suited to the broad skill set and evolving capabilities of generative AI.

The approach unfolding in Utah presents a haphazard template that recalls the early days of the medical profession, before consensus emerged around what constitutes proper training. Licensure emerged in the late nineteenth century in response to a chaotic marketplace of unevenly trained practitioners and dubious remedies. The 1910 Flexner Report, which surveyed the state of medical schools in North America, exposed wide variation in the quality of education and spurred reforms that standardized training and established uniform expectations for who could enter the profession. While states do grant licenses to practice medicine, every state board relies on a fairly consistent set of pre-professional requirements overseen by national bodies, from the Liaison Committee on Medical Education to the National Board of Medical Examiners.

If AI models are to be licensed in some capacity—whether for supervised or independent practice—we will need a common set of standards that define readiness for practice. That means articulating the competencies AI must demonstrate and developing evaluation methods tailored to the distinctive risks these systems pose. Passing a board-style exam is no longer a meaningful benchmark. Generative models may excel at test questions while still exhibiting confabulation, sycophancy, brittleness, or unpredictable variability in real-world settings. Those risks must be measured before deployment, not discovered after harm occurs.

That will also require a central authority to oversee the process. It is not clear that the FDA intends to play that role. Its silence in the Utah pilot, alongside broader initiatives that favor real-world deployment followed by data collection, suggests a more decentralized and improvised approach. The emerging playbook is one in which companies define their own benchmarks, persuade regulators of their adequacy, and deploy first while promising to study performance later.

This type of patchwork is no substitute for a coherent national framework. Medicine learned this lesson more than a century ago. Standardization emerged not to stifle innovation but to protect patients from uneven training and unproven practitioners. AI will undoubtedly shape the future of medicine, as it will every industry. The difference is that when we move fast and break things, the stakes are human lives.

Federal Officials Factor in Health Gains to Control Spending, LDI Fellow Says

In a First-of-Its-Kind Analysis, Researchers Track Industry Payments Tied to AI Medical Devices and Call for Stronger FDA Oversight, Transparency, and Aid for Underserved Hospitals

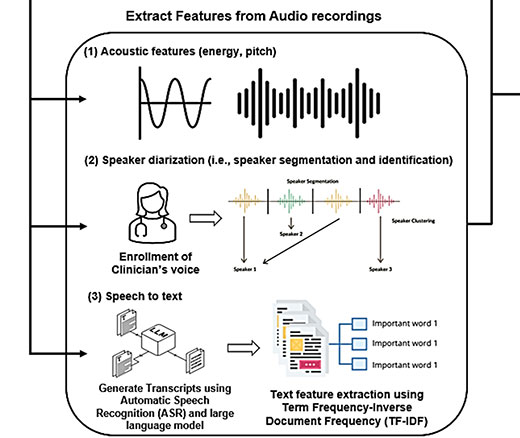

Researchers Use AI to Analyze Patient Phone Calls for Vocal Cues Predicting Palliative Care Acceptance

A Licensure Model May Offer Safer Oversight as Clinical AI Grows More Complex, a Penn LDI Doctor Says

Study of Six Large Language Models Found Big Differences in Responses to Clinical Scenarios

Experts at Penn LDI Panel Call for Rapid Training of Students and Faculty